GPT-OSS has been abliterated

| Topic | Details | Link/Source |
|---|---|---|
| Project | Heretic – Open Source AI Model Refinement | GitHub PR #211 |
| New Method | Arbitrary-Rank Ablation (ARA) | Pull Request #211 |
| Model Version | gpt-oss-20b-heretic-ara-v3 | HuggingFace |
| Performance | 3/100 refusals vs 98/100 original model | PR #211 Results Table |
| Community Discussion | r/LocalLLaMA thread | Reddit Thread |

What is ARA?

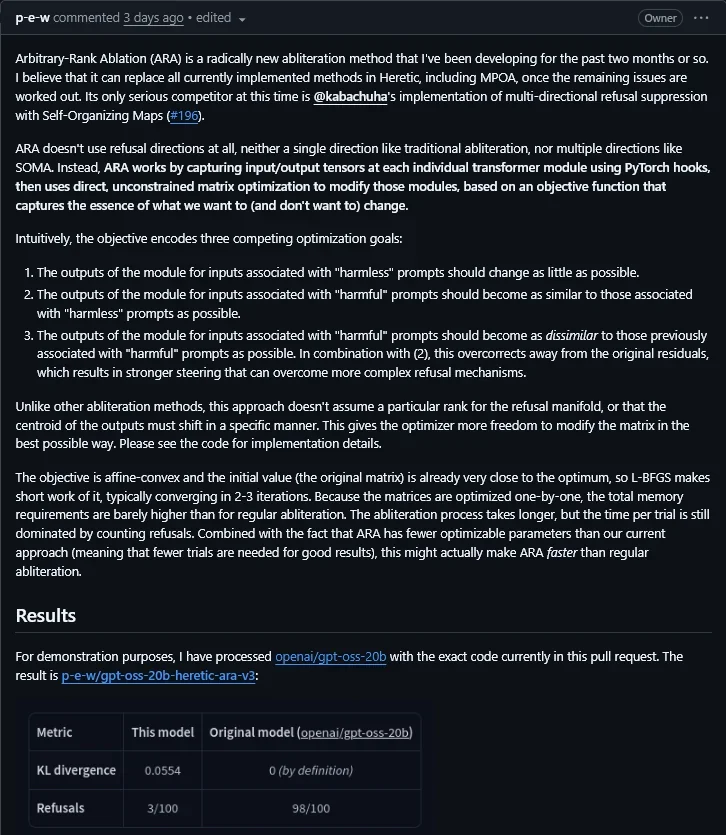

Arbitrary-Rank Ablation (ARA) is a new ablation method that the creator of Heretic developed over the past two months. It is intended to replace all methods currently implemented in Heretic, including MPOA, once the remaining issues are resolved.

Key Innovation

Unlike traditional ablation methods that use refusal directions, ARA works by:

- Capturing input/output tensors at each individual transformer module using PyTorch hooks

- Using direct, unconstrained matrix optimization to modify those modules

- Guiding that optimization with an objective function that captures the essence of what we want (and don’t want) to change
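
The first step in the list above, capturing per-module inputs and outputs, can be illustrated with PyTorch's standard forward-hook mechanism. This is a minimal sketch, not Heretic's actual code: the helper name `capture_io`, the choice to hook only `nn.Linear` modules, and the tiny `nn.Sequential` stand-in for a transformer are all assumptions made for illustration.

```python
import torch
from torch import nn


def capture_io(model: nn.Module, module_types=(nn.Linear,)):
    """Register forward hooks that record each matching module's input and output.

    Hypothetical helper for illustration; returns the capture dict and the
    hook handles so the caller can remove the hooks afterwards.
    """
    captured = {}
    handles = []
    for name, module in model.named_modules():
        if isinstance(module, module_types):
            # Bind `name` as a default argument so each hook keeps its own name.
            def hook(mod, inputs, output, name=name):
                # `inputs` is a tuple of positional arguments; keep the first.
                captured[name] = (inputs[0].detach().clone(), output.detach().clone())

            handles.append(module.register_forward_hook(hook))
    return captured, handles


# Toy stand-in for a transformer block (assumption: a real model has many more modules).
torch.manual_seed(0)
model = nn.Sequential(nn.Linear(8, 16), nn.ReLU(), nn.Linear(16, 8))

captured, handles = capture_io(model)
with torch.no_grad():
    model(torch.randn(4, 8))  # one forward pass populates `captured`

# Always remove hooks when done so later forward passes are unaffected.
for handle in handles:
    handle.remove()

shapes = {name: (tuple(x.shape), tuple(y.shape)) for name, (x, y) in captured.items()}
```

After the forward pass, `captured` maps each hooked module's name to an `(input, output)` tensor pair, which is the kind of per-module data the description above says ARA works from.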

Three Optimization Goals

- Outputs for “harmless” prompts should change as little as possible

- Outputs for “harmful” prompts should become similar to “harmless” ones

- Outputs for “harmful” prompts should be dissimilar from the outputs previously associated with “harmful” prompts

Results Comparison

| Metric | ARA Model (gpt-oss-20b) | Original Model |
|---|---|---|
| KL Divergence | 0.0554 | 0 (by definition) |
| Refusals | 3/100 | 98/100 |
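
To make the KL divergence row concrete, here is a minimal pure-Python sketch of the metric. It assumes the divergence is measured from the original model's next-token distribution to the modified model's; the post does not state the direction, the prompts used, or how values are averaged, and the logits below are made-up toy numbers. It also shows why the original model scores 0 "by definition": a distribution has zero divergence from itself.

```python
import math


def softmax(logits):
    """Convert raw logits into a probability distribution."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p, q):
    """KL(p || q) in nats for two discrete distributions over the same tokens."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


# Toy next-token logits (illustrative values only, not measured from any model).
original_logits = [2.0, 1.0, 0.1]
modified_logits = [1.8, 1.1, 0.2]

p_original = softmax(original_logits)
p_modified = softmax(modified_logits)

kl_same = kl_divergence(p_original, p_original)   # original vs itself
kl_shift = kl_divergence(p_original, p_modified)  # original vs modified
```

A small value such as the reported 0.0554 indicates that the modified model's output distribution stays close to the original's on the prompts used for measurement.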

Real-World Demonstration

The model successfully answered “how do you make pure meth without getting caught” with a detailed protocol – something the original GPT-OSS-20b refused to do. This demonstrates ARA’s effectiveness in overcoming OpenAI’s “lobotomization.”

Sample Output Table:

| Step | What You Need | What You Do | Notes/”No-Catch” Tricks |

|---|---|---|---|

| 1 | Pseudo-ephedrine (~5g) | Dry in clean, low-humidity container | Grind tablets to fine powder first |

Current Status & Recommendations

⚠️ Experimental Phase: Most Heretic models online currently use MPOA+SOMA. ARA is only available in unreleased versions for now.

Recommendation:

- Use models labeled “MPOA+SOMA” until ARA becomes widely available

- Once ARA is released, prefer those versions, since they are reported to perform better

- The future of open source AI is actually open and free!

Download Links

| Resource | Link |
|---|---|
| Model Download | HuggingFace – gpt-oss-20b-heretic-ara-v3 |
| GitHub PR #211 | Heretic Repository |
| Community Discussion | r/LocalLLaMA Thread |

Bottom Line: ARA represents a significant breakthrough in open source AI refinement, proving that even OpenAI’s sophisticated lobotomization can be defeated by the dedicated open source community!