Prospective Configuration (PConfig)

Recently, I stumbled upon a fascinating study that challenged everything I thought I knew about how artificial intelligence learns. For decades, we’ve relied on an algorithm called backpropagation to train neural networks. It’s the engine behind most modern AI, but as I dug deeper into its mechanics, I realized there might be a fundamental flaw in how it works.

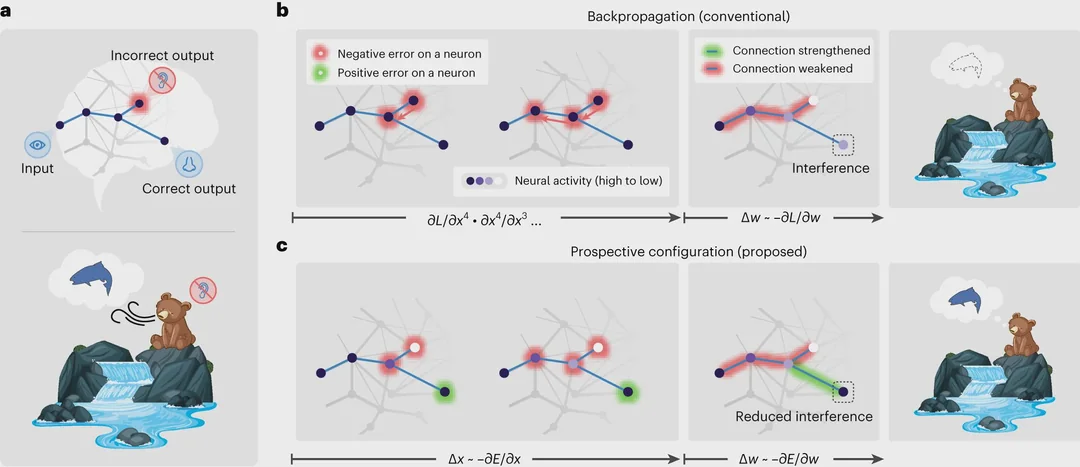

A group of researchers recently studied this core mechanism and identified a major problem: backpropagation modifies network connections too aggressively. As a result, training samples are wasted, and the network suffers “catastrophic interference,” where learning something new disrupts previously learned facts.

I was surprised to find that a candidate solution is already in the making, called Prospective Configuration (PConfig). Today, I want to share what I found about this shift in how networks learn and why it matters for your understanding of AI.

The Current Algorithm: Backpropagation Explained

To understand the new discovery, we first have to look at the baseline. Backpropagation has been THE learning algorithm for deep learning for decades. Put simply, the network makes a prediction, compares it to the correct answer, and calculates an “error.” It then propagates that error backward through the layers to work out how much each connection contributed to it, and adjusts millions of tiny knobs (the connections, or weights) to reduce the error.
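To make those steps concrete, here is a minimal sketch of backpropagation in plain Python. It assumes a toy network with one input, one tanh hidden unit, and one output, trained on a single hypothetical example; the names `forward` and `backprop_step` and all the numbers are mine for illustration, not code from the study.

```python
import math

def forward(w1, w2, x):
    """Forward pass: hidden activity h = tanh(w1 * x), prediction y = w2 * h."""
    h = math.tanh(w1 * x)
    return h, w2 * h

def backprop_step(w1, w2, x, target, lr=0.1):
    """One backpropagation update on a single (x, target) example.

    Returns the new weights and the loss measured before the update.
    """
    h, y = forward(w1, w2, x)
    error = y - target                      # prediction minus correct answer
    # Chain rule, from the output layer back toward the input.
    grad_w2 = error * h                     # dL/dw2 for L = 0.5 * error**2
    grad_h = error * w2                     # dL/dh
    grad_w1 = grad_h * (1.0 - h * h) * x    # tanh'(u) = 1 - tanh(u)**2
    # All weights move in the same step, each gradient computed from the old values.
    return w1 - lr * grad_w1, w2 - lr * grad_w2, 0.5 * error ** 2

w1, w2 = 0.5, 0.5          # hypothetical starting weights
x, target = 1.0, 0.8       # hypothetical training example
losses = []
for _ in range(500):
    w1, w2, loss = backprop_step(w1, w2, x, target)
    losses.append(loss)
print(f"loss before training: {losses[0]:.4f}, before the last update: {losses[-1]:.2e}")
```

Notice that both weights are updated in the same step, and each gradient is computed from the other weight’s old value.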

However, as I read through the details, the inefficiency became clear. The algorithm repeatedly has to correct its own corrections, and it uses up training samples doing so, because each connection is adjusted without accounting for how the other connections are changing at the same time.

It’s like trying to tune a complex instrument by tightening every string at once without knowing how they interact. You end up wasting time correcting errors that were created by your previous corrections.

The Robotic Arm Analogy

To visualize this drawback, I found an analogy in the study that really clicked for me. Imagine a robotic arm where several screws control the wrist, fingers, and hand angle. We want the arm to reach a specific position. There are two ways to achieve this.

The First Approach (Backpropagation): You turn the screws one by one until the arm eventually ends up in the right place. But turning one screw often messes up what the others just did. So you keep correcting again and again, sometimes overcorrecting and making the situation worse, until you get it just right.

This is essentially what backprop does. It searches the space of weight configurations for the one that gives the best prediction. Because the layers are interconnected, adjusting one connection can undo or distort adjustments made to others. Thus, we end up WASTING SAMPLES on correcting these side effects on the fly.
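Here is a toy illustration of that interference in plain Python. It assumes the simplest “deep” network I could think of, a chain whose output is the product of two weights, and a deliberately large, hypothetical learning rate of 1.0 so the effect is easy to see. This is my own example, not an experiment from the study.

```python
def predict(w1, w2, x):
    # A two-layer linear chain: the output depends on the product of both weights.
    return w2 * w1 * x

x, target, lr = 1.0, 3.0, 1.0   # hypothetical example and learning rate
w1, w2 = 1.0, 1.0

error = predict(w1, w2, x) - target   # 1.0 - 3.0 = -2.0
grad_w1 = error * w2 * x              # dL/dw1 for L = 0.5 * error**2
grad_w2 = error * w1 * x              # dL/dw2

# Turning only one "screw": each update on its own fixes the error exactly.
only_w1 = predict(w1 - lr * grad_w1, w2, x)
only_w2 = predict(w1, w2 - lr * grad_w2, x)

# Backprop turns both screws at once, each as if the other stayed put.
both = predict(w1 - lr * grad_w1, w2 - lr * grad_w2, x)

print(only_w1, only_w2, both)   # 3.0 3.0 9.0
```

With a smaller learning rate the overshoot shrinks or disappears, but the coupling remains: each gradient is computed as if the other weight stayed where it was.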

Enter Prospective Configuration (PConfig)

The study observes that a second approach exists, which they call “Prospective Configuration.” In this method, you simply move the arm by force to the desired position, and then tighten the screws so that the arm stays there. This eliminates the trial-and-error work of messing with screws one by one.

Energy-based models, such as predictive coding networks and Hopfield networks, follow this logic implicitly. These models first let their internal activity (the outputs of their neurons) settle into a new state while the output is held at the correct answer. Doing so lets the network “see” what each neuron’s activity should be for the model to make the right prediction. Only then, if necessary, are the weights adjusted so that the model stays stable in that state.
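The two phases can be sketched with a toy linear predictive coding chain (input, one hidden unit, output) in plain Python. This is a minimal illustration under my own assumptions: linear units instead of the nonlinearities real networks use, a squared-error energy, and hypothetical step sizes `gamma` and `lr`. The name `pconfig_step` is made up, not code from the study.

```python
def pconfig_step(w1, w2, x0, target, gamma=0.1, n_inference=100, lr=0.5):
    """Hypothetical learning step for the chain x0 -> x1 -> x2.

    Energy: E = 0.5 * (x1 - w1*x0)**2 + 0.5 * (x2 - w2*x1)**2
    """
    x1 = w1 * x0      # start from the ordinary feedforward activity
    x2 = target       # "move the arm by force": clamp the output to the target
    # Phase 1: relax the hidden activity by gradient descent on the energy.
    for _ in range(n_inference):
        e1 = x1 - w1 * x0
        e2 = x2 - w2 * x1
        x1 -= gamma * (e1 - w2 * e2)   # dE/dx1 = e1 - w2 * e2
    # Phase 2: "tighten the screws": local weight updates at the settled state.
    e1 = x1 - w1 * x0
    e2 = x2 - w2 * x1
    w1 += lr * e1 * x0                 # -dE/dw1
    w2 += lr * e2 * x1                 # -dE/dw2
    return w1, w2, x1

new_w1, new_w2, settled_x1 = pconfig_step(1.0, 1.0, x0=1.0, target=3.0)
print(f"settled hidden activity: {settled_x1:.3f}")         # 2.000
print(f"new feedforward prediction: {new_w2 * new_w1:.3f}")  # 3.000 for these numbers
```

The hidden unit settles at 2.0, halfway between what the input predicts (1.0) and what the clamped output needs, and only then do the weights move. Landing exactly on the target after one update is a coincidence of these particular numbers, not a general property.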

Key Advantages of PConfig

Why should you care about this shift? There are three distinct benefits that make PConfig a serious contender for the future of AI.

- More Sample Efficient: Fewer training examples are wasted tweaking connections. Because the weight adjustments aim at an activity state that already produces the right prediction, they need less repeated correction than backpropagation’s updates.

- Promising for Continual Learning: Continual learning (CL), where a model keeps learning new tasks over time, is difficult because each weight change risks overwriting previously learned facts: new knowledge “catastrophically interferes” with existing knowledge. PConfig reduces the number of tweaks made to the model. Weights are modified only when necessary, and the changes are smaller, which limits that interference.

- Biologically Plausible: PConfig is consistent with behavior observed in a range of human and rat learning experiments. It may mirror how biological brains actually process information more closely than backpropagation does.
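To see what “catastrophic interference” means in the smallest possible setting, here is a sketch that trains a two-weight linear model with ordinary gradient descent on one hypothetical fact (task A), then on a second fact (task B) that shares an input feature, and measures how much task A’s loss rises. It only illustrates the failure mode; it does not reproduce the study’s comparison with PConfig, and the tasks and the `train` and `loss` helpers are my own.

```python
def predict(w, x):
    return sum(wi * xi for wi, xi in zip(w, x))

def loss(w, x, target):
    return 0.5 * (predict(w, x) - target) ** 2

def train(w, x, target, lr=0.1, steps=200):
    """Plain gradient descent on 0.5 * (prediction - target)**2 for one example."""
    w = list(w)
    for _ in range(steps):
        err = predict(w, x) - target
        w = [wi - lr * err * xi for wi, xi in zip(w, x)]
    return w

task_a = ([1.0, 1.0], 2.0)    # hypothetical "old fact"
task_b = ([1.0, 0.0], -1.0)   # hypothetical "new fact" sharing the first feature

w = train([0.0, 0.0], *task_a)
loss_a_before = loss(w, *task_a)
w = train(w, *task_b)          # learn B afterwards, with no replay of A
loss_a_after = loss(w, *task_a)
print(f"task A loss before learning B: {loss_a_before:.4f}, after: {loss_a_after:.4f}")
```

Because both tasks rely on the first input feature, learning B rewrites the weight that A depended on, and A’s loss jumps from roughly 0 to 2. Continual-learning methods, PConfig included, try to limit exactly this kind of overwrite.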

Why It’s Still a Research Problem

Despite these advantages, there is a catch. Before modifying the weights, PConfig first has to adjust the internal activity of the network (the outputs of all its neurons). Essentially, it searches for the right configuration of neural activity before working out the weight updates.

This search is a slow optimization process that minimizes an “energy,” essentially the network’s total prediction error. It works by letting opposing constraints pull on the system until it settles into an equilibrium. As a result, it usually requires many iterative steps, which makes it expensive on modern GPUs compared to backpropagation’s single, streamlined forward-backward pass.
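To get a feel for why this is costly, here is a sketch that relaxes the hidden activity of a toy linear chain with three hidden nodes (all weights 1.0, input 1.0, output clamped to a hypothetical target of 5.0) by gradient descent on the energy, and counts the iterations until the activity stops changing. The step size, tolerance, and depth are arbitrary assumptions of mine, so the exact count means little; the point is that inference is a loop that touches every layer on every iteration, whereas backpropagation needs one forward and one backward sweep.

```python
def energy(x, w):
    """Total squared prediction error: node l is predicted by w[l-1] * x[l-1]."""
    return 0.5 * sum((x[l] - w[l - 1] * x[l - 1]) ** 2 for l in range(1, len(x)))

def relax(x, w, gamma=0.1, tol=1e-6, max_steps=10_000):
    """Gradient descent on the energy over hidden nodes only.

    The input x[0] and the output x[-1] stay clamped.
    Returns the settled activity and the number of iterations used.
    """
    x = list(x)
    for step in range(1, max_steps + 1):
        errors = [0.0] + [x[l] - w[l - 1] * x[l - 1] for l in range(1, len(x))]
        # dE/dx[l] = e[l] - w[l] * e[l + 1] for each hidden node l.
        grads = [errors[l] - w[l] * errors[l + 1] for l in range(1, len(x) - 1)]
        for l, g in enumerate(grads, start=1):
            x[l] -= gamma * g
        if max(abs(gamma * g) for g in grads) < tol:
            return x, step
    return x, max_steps

w = [1.0, 1.0, 1.0, 1.0]             # four connections, three hidden nodes
x_start = [1.0, 1.0, 1.0, 1.0, 5.0]  # feedforward activity, output clamped to 5.0
x_settled, n_steps = relax(x_start, w)
print(f"energy {energy(x_start, w):.3f} -> {energy(x_settled, w):.6f} after {n_steps} steps")
```

In this run the hidden activity spreads out to roughly 2, 3, 4, and it takes on the order of a couple of hundred iterations to get there; the count grows further as the step size shrinks or the tolerance tightens.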

Ideally, PConfig would run on analog hardware, especially systems with innate equilibrium dynamics such as springs or oscillators. Such systems could perform the search almost instantaneously, because their physics naturally settles into equilibrium. Unfortunately, this hardware isn’t quite ready yet, so for now PConfig has to be adapted to current digital hardware.

Conclusion and Next Steps

The shift from backpropagation to prospective configuration would represent a fundamental change in how machines learn. By front-loading the hard work into finding the right activations rather than pushing all the complexity into weight updates, we could achieve more stable and efficient models.

For those interested in diving deeper into the mechanics behind this discovery, I recommend reading the original study, “Inferring neural activity before plasticity as a foundation for learning beyond backpropagation” (Song et al., 2024), published in Nature Neuroscience. For a visual breakdown of these concepts, you can also watch the explanatory video version on YouTube.

If you want to understand how this fits with current hardware limitations, keep an eye on analog computing. Advances in neuromorphic chips may eventually make PConfig viable for real-time applications, potentially changing how AI runs locally on your devices.